Field plowing is performed by a robot in the form of a driverless tractor. A variety of sensors, such as GPS, Lidar and cameras, replace the driver. At its core, the tractor relies on GPS guidance along a predefined planned path. Machine vision is in charge of the navigation task, in particular obstacle avoidance. Lidar provides a 3D model of the environment around the robotic tractor, and cameras provide a corresponding video stream of this environment. Machine vision’s first task is to fuse the data from these sensors. Once the data are synchronized, obstacles are identified and an action (such as bypassing the obstacle) is taken. Classic recognition algorithms may be used here, working with the image (regular cameras) and its dimensions (Lidar). However, their performance is closely tied to the conditions they were programmed for. Variations in intensity, color, shape and dimensions can badly degrade performance, meaning that obstacles are misidentified or missed. Today we use deep learning to identify objects whose position puts them on a collision path. When correctly trained on a broad range of tagged examples, a deep learning classifier can provide highly accurate navigation.

Seeding is much the same as plowing. The added challenge here is to follow the rows tightly and plant the seeds in their center. Navigation here is deep learning based, which makes it possible to identify the rows in any light condition.
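The synchronization step that precedes obstacle identification on the plowing robot can be illustrated with a minimal sketch. It assumes that every camera frame and every Lidar scan carries a timestamp in seconds and that the two streams are paired by nearest timestamp. The function name `pair_by_timestamp` and the `max_gap` tolerance are hypothetical choices made for this illustration, not a description of a specific product.

```python
from bisect import bisect_left


def pair_by_timestamp(camera_ts, lidar_ts, max_gap=0.05):
    """Pair each camera frame with the nearest Lidar scan in time.

    camera_ts, lidar_ts: sorted lists of timestamps in seconds.
    max_gap: assumed tolerance; pairs further apart than this are dropped.
    Returns a list of (camera_index, lidar_index) tuples.
    """
    pairs = []
    for cam_index, t in enumerate(camera_ts):
        pos = bisect_left(lidar_ts, t)
        # Candidates: the scan just before and the scan just after time t.
        candidates = [i for i in (pos - 1, pos) if 0 <= i < len(lidar_ts)]
        if not candidates:
            continue
        best = min(candidates, key=lambda i: abs(lidar_ts[i] - t))
        if abs(lidar_ts[best] - t) <= max_gap:
            pairs.append((cam_index, best))
    return pairs


# Illustrative timestamps only: the last camera frame has no scan close enough.
camera_times = [0.00, 0.10, 0.20, 0.50]
lidar_times = [0.01, 0.11, 0.19]
matched = pair_by_timestamp(camera_times, lidar_times)
```

Frames left without a partner are simply skipped here. A real system might interpolate between scans instead, which is a design choice this sketch does not cover.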

Robots using Machine Vision for weed handling

Weed handling is performed by a self-driving robotic platform. The challenge is to maneuver between crops, identify and classify weeds, and handle them by spraying them or picking them out. This robot, usually smaller than the plowing and seeding robots, combines autonomous driving capabilities with camera-controlled, multi-joint arms. Automatic navigation of these robots uses a CNN (Convolutional Neural Network) trained with deep learning. This classifier drives the robot through the gaps between the planted rows. An additional CNN classifier uses the camera feed to recognize plants and classify them as crops or weeds. A further camera, placed on the robot’s multi-joint arm, helps position the arm’s tool for better weed handling. The machine vision algorithms here first run calibration and registration procedures to make sure that all components work in the same coordinate system, so that data handover is simple.
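To illustrate what working in the same coordinate system means in practice, here is a minimal sketch that maps a weed position seen by the arm camera into the robot base frame with a homogeneous transform. The mounting values (a 90 degree rotation about the vertical axis and an offset of 0.5 m forward and 1.2 m up) are invented for the example. A real robot would obtain them from its calibration and registration procedures.

```python
import math


def make_transform(yaw_rad, translation):
    """Build a 4x4 homogeneous transform: rotation about Z plus a translation."""
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    tx, ty, tz = translation
    return [
        [c, -s, 0.0, tx],
        [s, c, 0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]


def apply_transform(transform, point):
    """Map a 3D point through a 4x4 homogeneous transform."""
    x, y, z = point
    p = (x, y, z, 1.0)
    return tuple(sum(transform[r][k] * p[k] for k in range(4)) for r in range(3))


# Hypothetical calibration result: pose of the arm camera in the robot base frame.
BASE_FROM_ARM_CAMERA = make_transform(math.pi / 2, (0.5, 0.0, 1.2))

# A weed detected 1 m along the camera X axis and 1 m below the camera.
weed_in_camera = (1.0, 0.0, -1.0)
weed_in_base = apply_transform(BASE_FROM_ARM_CAMERA, weed_in_camera)
# weed_in_base is approximately (0.5, 1.0, 0.2) in the base frame
```

Once every sensor reports positions in the base frame, the driving classifier, the weed classifier and the arm controller can exchange coordinates without further conversion.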

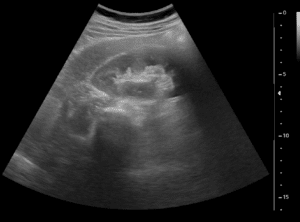

Produce growth monitoring can be performed by a ground-based robot or by a flying robotic UAV. The ground-based robot is the better match for fruits and vegetables, while UAVs with autonomous navigation are best for cotton, soybeans and similar crops. The ground-based robot uses the same autonomous driving and navigation capabilities as explained above. It is equipped with scanning cameras that provide a video stream of the crops. The machine vision algorithms, for their part, use a deep learning classifier to recognize and measure fruits and vegetables. Such CNN algorithms are the best technology available today for maintaining classification performance in the presence of intensity, color and geometric variations. An autonomously navigating UAV is able to avoid obstacles by classifying objects that could block its flight path. Its pod carries multispectral cameras, which assist in producing the vegetation index NDVI (Normalized Difference Vegetation Index).
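NDVI is computed per pixel from the near-infrared (NIR) and red reflectance bands as (NIR - Red) / (NIR + Red), giving values between -1 and 1, with dense healthy vegetation toward the high end. The sketch below applies that standard formula to small nested lists that stand in for the two bands. Returning 0.0 when both bands are zero is an assumption made here to avoid a division by zero, not a fixed convention.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel."""
    total = nir + red
    if total == 0:
        # Assumed convention for this sketch: no signal maps to 0.0.
        return 0.0
    return (nir - red) / total


def ndvi_map(nir_band, red_band):
    """Apply NDVI pixel by pixel to two equally sized 2D bands."""
    return [
        [ndvi(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


# Illustrative reflectance values only (not measured data).
nir_band = [[0.5, 0.3], [0.0, 0.8]]
red_band = [[0.1, 0.3], [0.0, 0.2]]
index = ndvi_map(nir_band, red_band)
```

In this toy input, the pixels where NIR reflectance clearly exceeds red reflectance get a positive index, while the pixel with equal bands gets zero.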

Machine Vision algorithms

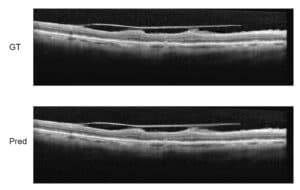

Fruit and vegetable picking is a complex process requiring a set of machine vision algorithms that control a robotic structure with many DOF (degrees of freedom). This robot may be human-like, moving around while manipulating its arm(s) to reach the fruits. As noted above, the machine vision algorithms should be fully “synchronized”. For example, the navigation and localization algorithms should update the other machine vision algorithms so that they adjust their reference system in accordance with the robot’s movements. The deep learning based autonomous driving function is in charge of moving between the rows. The robot’s arm actually carries two cameras: one scans the tree to detect and classify fruits, while the other guides the arm’s tool to the best picking location and orientation. Both activities, navigating and detecting the fruits, benefit from the machine vision’s CNN capabilities. The fruit classification function achieves high sorting accuracy, even when fruits are partly occluded and under harsh light conditions.
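The reference system update mentioned above can be sketched in two dimensions. A fruit stays at a fixed position in the world, so each time localization reports a new robot pose, the fruit position has to be re-expressed in the robot frame. The pose format `(x, y, heading)` and the function name `world_to_robot` are assumptions made for this illustration.

```python
import math


def world_to_robot(point_xy, robot_pose):
    """Express a world-frame point in the robot frame.

    robot_pose: (x, y, heading_rad) of the robot in the world frame,
    with the robot X axis pointing forward and Y pointing left.
    """
    px, py = point_xy
    rx, ry, heading = robot_pose
    dx, dy = px - rx, py - ry
    c, s = math.cos(heading), math.sin(heading)
    # Rotate the offset by the inverse of the robot heading.
    return (c * dx + s * dy, -s * dx + c * dy)


# Hypothetical fruit position and two successive robot poses.
fruit_world = (2.0, 1.0)
before_move = world_to_robot(fruit_world, (0.0, 0.0, 0.0))
after_move = world_to_robot(fruit_world, (1.0, 0.0, math.pi / 2))
# after_move is approximately (1.0, -1.0): one meter ahead, one meter to the right
```

The arm controller would then plan its reach from the updated coordinates rather than from the position recorded before the robot moved.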

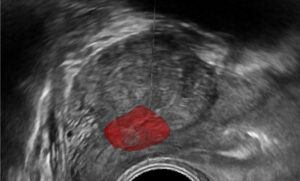

Sorting and grading robots using Deep Learning

Sorting and grading robots work as arms with cameras mounted over conveyors. Using their cameras, they obtain a fast and accurate classification of fruits. Their deep learning algorithms can identify defects from any angle and with large color and geometric variation (provided that proper training was performed beforehand). The algorithms first perform object detection to locate the fruits and then classify them. RSIP Vision has extensive experience in precision agriculture and world-class proficiency in deep learning algorithms and CNNs. Consult our experts to see how best to apply robotics technology to your project.
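The detect-then-classify order used by the sorting and grading robots can be sketched as a small two-stage pipeline. In this sketch the detector and the classifier are passed in as plain functions. The two stubs below are placeholders invented for the example and only stand in for trained networks. They are not real models.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (row0, col0, row1, col1), end-exclusive
Image = Sequence[Sequence[float]]


@dataclass
class GradedFruit:
    box: Box
    grade: str


def crop(image: Image, box: Box) -> List[List[float]]:
    """Cut the region described by box out of the image."""
    r0, c0, r1, c1 = box
    return [list(row[c0:c1]) for row in image[r0:r1]]


def sort_and_grade(
    image: Image,
    detect: Callable[[Image], List[Box]],
    classify: Callable[[Image], str],
) -> List[GradedFruit]:
    """Stage 1 locates the fruits, stage 2 grades each cropped region."""
    return [GradedFruit(box, classify(crop(image, box))) for box in detect(image)]


def stub_detect(image: Image) -> List[Box]:
    """Placeholder detector: always reports two fixed 2x2 regions."""
    return [(0, 0, 2, 2), (0, 2, 2, 4)]


def stub_classify(patch: Image) -> str:
    """Placeholder classifier: grades by mean brightness of the patch."""
    values = [v for row in patch for v in row]
    return "accept" if sum(values) / len(values) >= 0.5 else "reject"


# Toy 4x4 grayscale frame standing in for a conveyor image.
frame = [
    [0.9, 0.9, 0.1, 0.1],
    [0.9, 0.9, 0.1, 0.1],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
graded = sort_and_grade(frame, stub_detect, stub_classify)
```

Keeping the two stages behind separate function arguments means either one can be retrained or replaced without touching the other.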