Classically, object tracking algorithms start with a known object to track, enclosed in a bounding box. The algorithm computes features inside that bounding box, associates them with the object, and then searches the next frame for the most probable bounding box: the one whose features are as close as possible to those from the previous frame. The search proceeds by looking for the bounding box in frame t+1 that results from applying an affine transformation to the box in the previous frame t. This approach is exemplified by the algorithm of Lucas, Kanade and Tomasi.
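
The search step can be illustrated with a minimal sketch. It is not the Lucas-Kanade-Tomasi method itself: for brevity it assumes raw pixel intensities as the "features", restricts the affine search to integer translations, and scores candidates by exhaustive sum of squared differences. The function name `track_by_translation` and the `search_radius` parameter are hypothetical, chosen only for this illustration.

```python
import numpy as np


def track_by_translation(prev_frame, next_frame, box, search_radius=4):
    """Find the box in next_frame that best matches the patch in prev_frame.

    box is (row, col, height, width). Pixel intensities stand in for features,
    and only integer translations are tried (a simplification of an affine search).
    """
    r0, c0, h, w = box
    template = prev_frame[r0:r0 + h, c0:c0 + w].astype(float)
    best_box, best_cost = box, np.inf
    for dr in range(-search_radius, search_radius + 1):
        for dc in range(-search_radius, search_radius + 1):
            r, c = r0 + dr, c0 + dc
            # Skip candidate boxes that fall outside the frame.
            if r < 0 or c < 0 or r + h > next_frame.shape[0] or c + w > next_frame.shape[1]:
                continue
            candidate = next_frame[r:r + h, c:c + w].astype(float)
            cost = np.sum((candidate - template) ** 2)  # sum of squared differences
            if cost < best_cost:
                best_box, best_cost = (r, c, h, w), cost
    return best_box, best_cost


# Synthetic frames: a textured 6x6 patch on a flat background moves by (+2, -3) pixels.
rng = np.random.default_rng(0)
patch = rng.integers(50, 255, size=(6, 6))
frame_t = np.zeros((32, 32), dtype=int)
frame_t[10:16, 12:18] = patch
frame_t1 = np.zeros((32, 32), dtype=int)
frame_t1[12:18, 9:15] = patch

new_box, cost = track_by_translation(frame_t, frame_t1, box=(10, 12, 6, 6))
print(new_box, cost)
```

On this synthetic pair the search recovers the shifted box at row 12, column 9 with zero matching cost. On real video the cost is rarely zero, and the nested loop over candidate boxes is the part that grows expensive as the search space widens.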

The classical approach above has several drawbacks. Firstly, the bounding box of a non-convex object may contain a large portion of the background; if that background is complex, the features extracted from it can confuse matching in the next frame. Secondly, feature matching algorithms can easily lose track of the main object when it is occluded (e.g., a hand passing over a face). Finally, computing and matching features, even for an object undergoing small continuous motion, is a bottleneck for real-time tracking, and tracking speed can be as slow as 0.8-10 fps.

To resolve some of the difficulties of classical tracking algorithms, deep convolutional networks have been proposed as a possible solution. These networks are trained offline and provide a means of overcoming occlusion, based on the knowledge of motion continuity learned in the training phase. In addition, such a network can handle a variety of object types (convex and non-convex), which improves its overall usability over classical solutions.

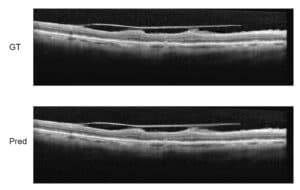

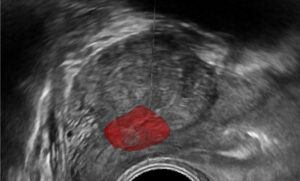

The output of such a trained network is the set of coordinates of a bounding box containing the object to track in each frame of the video sequence. A notable network architecture for tracking tasks has an input layer with two nodes corresponding to two consecutive frames: the first is annotated and centered on the object to track, and the second is the frame in which the bounding box is to be localized. Frame information is passed to convolutional (filter) layers; the resulting feature values are concatenated and passed through three fully connected layers. Finally, the information reaches four output nodes, which encode the corners of the bounding box containing the object in the next frame.
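
The data flow through such a network can be sketched in a few lines of NumPy. This is only a shape-level illustration with random, untrained weights, not the architecture's actual implementation. The layer sizes, the single convolutional layer, the sharing of convolution weights between the two branches, and the reading of the four outputs as (x1, y1, x2, y2) are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)


def conv_relu(x, kernels):
    """Valid cross-correlation followed by ReLU.

    x: (C_in, H, W), kernels: (C_out, C_in, k, k) -> (C_out, H-k+1, W-k+1).
    """
    k = kernels.shape[-1]
    windows = np.lib.stride_tricks.sliding_window_view(x, (k, k), axis=(1, 2))
    return np.maximum(np.einsum("chwij,ocij->ohw", windows, kernels), 0.0)


def dense(x, weights, bias, relu=True):
    """Fully connected layer with optional ReLU."""
    y = weights @ x + bias
    return np.maximum(y, 0.0) if relu else y


# Hypothetical sizes, chosen only to keep the sketch small.
CROP, K, C_OUT, HIDDEN = 16, 3, 4, 32
feat_len = C_OUT * (CROP - K + 1) ** 2  # features produced by one branch

params = {
    "conv": rng.normal(0, 0.1, (C_OUT, 3, K, K)),  # shared by both branches (assumption)
    "fc1": (rng.normal(0, 0.01, (HIDDEN, 2 * feat_len)), np.zeros(HIDDEN)),
    "fc2": (rng.normal(0, 0.1, (HIDDEN, HIDDEN)), np.zeros(HIDDEN)),
    "fc3": (rng.normal(0, 0.1, (HIDDEN, HIDDEN)), np.zeros(HIDDEN)),
    "out": (rng.normal(0, 0.1, (4, HIDDEN)), np.zeros(4)),
}


def predict_box(prev_crop, next_crop, params):
    """Two-branch forward pass: conv features -> concatenate -> 3 FC layers -> 4 outputs."""
    f_prev = conv_relu(prev_crop, params["conv"]).ravel()  # frame centered on the object
    f_next = conv_relu(next_crop, params["conv"]).ravel()  # frame to search in
    x = np.concatenate([f_prev, f_next])
    for name in ("fc1", "fc2", "fc3"):
        x = dense(x, *params[name])
    # Four output nodes; interpreted here as (x1, y1, x2, y2), which is an assumption.
    return dense(x, *params["out"], relu=False)


prev_crop = rng.random((3, CROP, CROP))  # RGB crop from frame t
next_crop = rng.random((3, CROP, CROP))  # RGB crop from frame t+1
box = predict_box(prev_crop, next_crop, params)
print(box.shape)
```

Because the weights are random, the predicted coordinates are meaningless here; the point is that inference is a single feed-forward pass with no per-frame search, which is consistent with the speed advantage over iterative feature matching.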

Because the network is trained offline, object tracking can run at a surprising 100 fps on a modern GPU, and at about 20 fps on a CPU. The construction and training of a CNN for tracking requires careful planning and execution.

Our experts at RSIP Vision have worked for many years with state-of-the-art algorithms for object tracking under the most severe conditions in natural videos, such as low-dose X-ray, cloudy road conditions and security cameras. We at RSIP Vision are committed to providing the highest quality tailor-made algorithms for our clients’ needs, with unparalleled accuracy and reproducibility. To learn more about RSIP Vision’s activities in a large variety of domains, please visit our project page. To learn how RSIP Vision can contribute to advancing your project, please contact us and consult our experts.