Augmented reality (AR), the superposition of virtual graphical models on a live view of a real scene, has been progressing steadily and finds applications in diverse domains, from recreational to clinical. Traditionally, a virtual 3D model is placed in a scene acquired by a camera, based on several landmark points appearing in that scene. In this way, graphics can be overlaid on outdoor scenes so that a player can interact with them, information can be displayed about the landscape seen through the camera lens, virtual houses can be erected on top of architectural blueprints, models of organs can appear on screen for surgeons in operating rooms, and much more.

Since the beginning of AR, about 20 years ago, much progress has been made, to the point that objects appearing in the scene can themselves serve as landmark points. These points form the basis on top of which the virtual model is constructed. Originally, such landmark points were mainly static and only the camera motion was considered. In real-life applications, however, landmark points can change position from frame to frame. When no specific pre-determined symbol is present in the scene, the search and tracking modules need to rely on other, less predictable types of landmarks. Such points are naturally taken from features of the frame image. Features like SURF and Harris corners (or a combination of them) are popular first choices for this object feature tracking process.
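To make the idea of a corner feature concrete, here is a minimal sketch of the Harris corner response in plain Python. It is an illustration only: the function name `harris_response`, the unweighted 3x3 window (a Gaussian window is more usual) and the constant `k = 0.04` are assumptions made for this example, and a production system would normally rely on an optimized vision library.

```python
def harris_response(img, k=0.04):
    """Return the Harris response R = det(M) - k * trace(M)^2 for every pixel."""
    h, w = len(img), len(img[0])
    # Central-difference gradients; border pixels keep a zero gradient.
    ix = [[0.0] * w for _ in range(h)]
    iy = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            ix[y][x] = (img[y][x + 1] - img[y][x - 1]) / 2.0
            iy[y][x] = (img[y + 1][x] - img[y - 1][x]) / 2.0

    resp = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            sxx = syy = sxy = 0.0
            # Accumulate the structure tensor M over an unweighted 3x3 window
            # (assumption: a Gaussian-weighted window is the more common choice).
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    gx, gy = ix[y + dy][x + dx], iy[y + dy][x + dx]
                    sxx += gx * gx
                    syy += gy * gy
                    sxy += gx * gy
            det = sxx * syy - sxy * sxy
            trace = sxx + syy
            resp[y][x] = det - k * trace * trace
    return resp


# Synthetic 16x16 frame with a bright square covering rows and columns 4..11.
frame = [[1.0 if 4 <= x <= 11 and 4 <= y <= 11 else 0.0 for x in range(16)]
         for y in range(16)]
response = harris_response(frame)
```

On this synthetic frame the response is positive at the corner of the square (`response[4][4]`), negative along a straight edge (`response[4][8]`) and zero in flat regions, which is why thresholding the response selects corner-like points as trackable features.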

Feature points tracked throughout the sequence are matched for correspondence between consecutive frames. The affine transformation between the clouds of feature points in two consecutive frames is then deduced using robust registration techniques. Among these, RANSAC and its variants hold a prominent place: they estimate the parameters of the affine transformation from many random samples of point correspondences between the two clouds and output the parameters agreed upon by the majority of points (the consensus).
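The consensus idea can be sketched in a few lines of plain Python. This is a simplified illustration rather than a reference implementation: the names `solve_affine`, `apply_affine` and `ransac_affine`, as well as the parameters `iters`, `tol` and `seed`, are hypothetical, each hypothesis is fitted to a minimal sample of three correspondences, and the usual final refit on all inliers is omitted.

```python
import random


def solve_affine(src, dst):
    """Exact 2D affine map from three point pairs (Cramer's rule); None if degenerate."""
    (x1, y1), (x2, y2), (x3, y3) = src
    det = x1 * (y2 - y3) - y1 * (x2 - x3) + (x2 * y3 - x3 * y2)
    if abs(det) < 1e-9:  # collinear sample: no unique affine map
        return None
    rows = []
    for i in (0, 1):  # solve separately for the output x and y coordinates
        u1, u2, u3 = dst[0][i], dst[1][i], dst[2][i]
        a = (u1 * (y2 - y3) - y1 * (u2 - u3) + (u2 * y3 - u3 * y2)) / det
        b = (x1 * (u2 - u3) - u1 * (x2 - x3) + (x2 * u3 - x3 * u2)) / det
        c = (x1 * (y2 * u3 - y3 * u2) - y1 * (x2 * u3 - x3 * u2)
             + u1 * (x2 * y3 - x3 * y2)) / det
        rows.append((a, b, c))
    return tuple(rows)


def apply_affine(model, p):
    (a, b, c), (d, e, f) = model
    return (a * p[0] + b * p[1] + c, d * p[0] + e * p[1] + f)


def ransac_affine(src, dst, iters=200, tol=1.0, seed=0):
    """Return the affine model with the largest consensus set and its inlier indices."""
    rng = random.Random(seed)
    best_model, best_inliers = None, []
    for _ in range(iters):
        idx = rng.sample(range(len(src)), 3)  # minimal random sample
        model = solve_affine([src[i] for i in idx], [dst[i] for i in idx])
        if model is None:
            continue
        inliers = []
        for i, (p, q) in enumerate(zip(src, dst)):
            px, py = apply_affine(model, p)
            if (px - q[0]) ** 2 + (py - q[1]) ** 2 <= tol ** 2:
                inliers.append(i)
        if len(inliers) > len(best_inliers):
            best_model, best_inliers = model, inliers
    return best_model, best_inliers


# Twelve correct matches under a known affine map, plus three gross mismatches.
true_model = ((0.9, -0.1, 5.0), (0.1, 0.9, -3.0))
src = [(float(x), float(y)) for y in (0, 30, 60) for x in (0, 25, 50, 75)]
dst = [apply_affine(true_model, p) for p in src]
src += [(10.0, 10.0), (40.0, 45.0), (70.0, 20.0)]
dst += [(90.0, 90.0), (0.0, 5.0), (15.0, 80.0)]

model, inliers = ransac_affine(src, dst)
```

In this toy setup the three mismatched pairs never gather enough support, so the returned model is the one fitted to correct matches only. A least-squares fit over all fifteen pairs would instead be pulled away from the true transformation by the mismatches.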

As with many tracking procedures, tricks and patches can be applied to object feature tracking in order to increase the weight of inlying points while reducing sensitivity to outliers. For example, points can be added to or removed from tracking according to a purpose-built feature quality measure; motion models can be incorporated to rule out sharp, improbable transformations, in line with the model’s limitations; and search regions for matching can be narrowed using predictive motion models such as the Kalman filter.
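As a rough illustration of how a predictive motion model narrows the search region, the sketch below tracks one coordinate of a feature with a constant-velocity Kalman filter. The class name `ConstantVelocityKalman1D`, the noise settings `p`, `q` and `r`, the simplified diagonal process noise and the three-sigma search radius are all assumptions chosen for the example, not values taken from any particular system.

```python
import math


class ConstantVelocityKalman1D:
    """Kalman filter over the state [position, velocity] for one image axis."""

    def __init__(self, pos, vel=0.0, p=100.0, q=0.01, r=1.0):
        self.pos, self.vel = pos, vel
        self.p00, self.p01, self.p11 = p, 0.0, p  # symmetric 2x2 covariance
        self.q, self.r = q, r  # process and measurement noise (assumed values)

    def predict(self, dt=1.0):
        """Propagate the state one frame ahead and return the predicted position."""
        self.pos += dt * self.vel
        # P <- F P F^T + Q, with F = [[1, dt], [0, 1]] and Q = q * I (simplified).
        p00 = self.p00 + dt * (2.0 * self.p01 + dt * self.p11) + self.q
        p01 = self.p01 + dt * self.p11
        p11 = self.p11 + self.q
        self.p00, self.p01, self.p11 = p00, p01, p11
        return self.pos

    def update(self, z):
        """Correct the state with a measured position z."""
        s = self.p00 + self.r  # innovation variance
        k0, k1 = self.p00 / s, self.p01 / s  # Kalman gain
        innovation = z - self.pos
        self.pos += k0 * innovation
        self.vel += k1 * innovation
        # P <- (I - K H) P, with H = [1, 0].
        p00 = (1.0 - k0) * self.p00
        p01 = (1.0 - k0) * self.p01
        p11 = self.p11 - k1 * self.p01
        self.p00, self.p01, self.p11 = p00, p01, p11

    def search_radius(self, n_sigma=3.0):
        """Half-width of the matching window around the current position estimate."""
        return n_sigma * math.sqrt(self.p00 + self.r)


# A feature drifting 2 pixels per frame along x, observed without noise.
kf = ConstantVelocityKalman1D(pos=0.0)
initial_radius = kf.search_radius()
for t in range(1, 20):
    kf.predict()
    kf.update(2.0 * t)
predicted_x = kf.predict()   # expected position in frame 20
radius = kf.search_radius()  # window in which to look for the match
```

Once the filter has seen a few frames, the predicted position lands close to where the feature will actually appear and the search radius shrinks well below its initial value, so the matcher only has to examine a small window instead of the whole frame.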

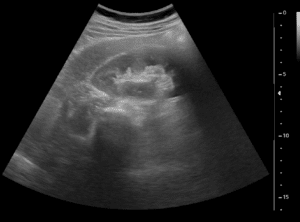

In augmented reality applications, not only does the base upon which the virtual model is constructed move, but most often the camera moves too. In many devices used as a screen for augmented reality (such as cellphones), motion sensors can indicate the relative translation, skew and rotation of the device from one time point to another. Such positional information is important for calibration, but when the capturing device is moving, the motion of the camera and the motion of the object still need to be separated from one another. With a static camera, as in augmented reality for surgery, one mainly needs to consider the transformation of the object. Augmented reality models operating in relatively sterile environments, such as clinical applications, make life much easier for algorithm developers for several reasons: sharp motions are not expected, lighting conditions are mostly constant and relatively few objects appear in the scene. In addition, markers are placed around the patient’s bed to support the positioning of the virtual model.
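One simple way to picture the separation of camera and object motion is with homogeneous 2D transforms. The sketch below assumes, purely for illustration, that the transform observed in the image is the composition of the object's own motion followed by the camera-induced motion, and that the camera-induced part is already known (for example from motion sensors). The helper names `rigid`, `matmul` and `invert_affine` are hypothetical.

```python
import math


def rigid(theta, tx, ty):
    """3x3 homogeneous matrix for a rotation by theta followed by a translation."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, tx], [s, c, ty], [0.0, 0.0, 1.0]]


def matmul(a, b):
    """Product of two 3x3 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def invert_affine(m):
    """Inverse of a 3x3 homogeneous affine matrix."""
    (a, b, tx), (c, d, ty) = m[0], m[1]
    det = a * d - b * c
    if abs(det) < 1e-12:
        raise ValueError("transform is not invertible")
    ia, ib, ic, id_ = d / det, -b / det, -c / det, a / det
    return [[ia, ib, -(ia * tx + ib * ty)],
            [ic, id_, -(ic * tx + id_ * ty)],
            [0.0, 0.0, 1.0]]


# Assumed model: observed = camera_motion * object_motion.
camera_motion = rigid(0.05, 3.0, -1.0)   # e.g. derived from motion sensors
object_motion = rigid(-0.20, 0.5, 2.0)   # the unknown we want to recover
observed = matmul(camera_motion, object_motion)

# Undo the camera-induced part to isolate the object's own motion.
recovered = matmul(invert_affine(camera_motion), observed)
```

Under this assumed composition order, `recovered` matches `object_motion` up to floating-point error. With a static camera, `camera_motion` is the identity and the observed transform is already the object transform.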

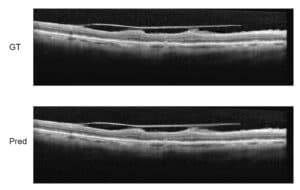

The set of tracked feature points forms the basis on which the virtual model is constructed. A stabilized basis model (chosen according to the nature of the application) needs to translate and deform in accordance with the tracked object. Stabilization therefore serves both objectives: the sense of realism in AR for recreational purposes and the accuracy needed in AR for surgery.
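The text does not prescribe a particular stabilization method. As one possible illustration, the sketch below applies exponential smoothing to each landmark track so that frame-to-frame jitter is damped before the virtual model is anchored to it. The function name `smooth_landmarks` and the smoothing factor `alpha` are assumptions made for this example.

```python
def smooth_landmarks(frames, alpha=0.5):
    """Exponentially smooth each landmark's (x, y) track across frames.

    frames: list of frames, each a list of (x, y) tuples in a fixed landmark order.
    alpha:  weight of the newest observation (1.0 disables smoothing).
    """
    smoothed = [list(frames[0])]
    for pts in frames[1:]:
        prev = smoothed[-1]
        smoothed.append([
            (alpha * x + (1.0 - alpha) * px, alpha * y + (1.0 - alpha) * py)
            for (x, y), (px, py) in zip(pts, prev)
        ])
    return smoothed


# A single landmark jittering by one pixel around x = 10.
raw = [[(10.0, 5.0)], [(11.0, 5.0)], [(9.0, 5.0)], [(11.0, 5.0)], [(9.0, 5.0)]]
stable = smooth_landmarks(raw)
xs = [frame[0][0] for frame in stable]
```

The smoothed track oscillates over a narrower range than the raw one. The price is lag: a smaller `alpha` gives a steadier overlay but follows genuine object motion more slowly, which matters more in surgical settings than in recreational ones.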

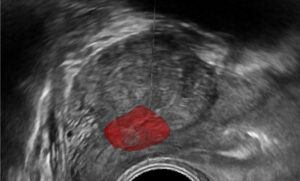

If the virtual model constructed on the basis of the tracked point model is to interact with other objects in the scene, depth information needs to be recovered as well. Recovering depth is far from trivial in natural outdoor environments, but it can be solved in a controlled environment. Such is the case with virtual augmented reality models interacting with robotic arms or surgical equipment, which can be tracked with excellent accuracy once the system has been calibrated.

Computer vision for augmented reality faces many challenges, in both recreational and industrial applications. With the maturity of several tracking algorithms and the increased computational power available for real-time processing, solutions adequate for practical use are now within reach. To date, however, there is no single off-the-shelf platform for AR, so algorithms have to be tailor-made for the application at hand. At RSIP Vision, we have been tailoring cutting-edge algorithmic solutions for the past 25 years. Our novel algorithms operate successfully across nearly all domains of modern industry.
