Summary: Indoor Scene Structure Analysis for Single Image Depth Estimation

This is the first in our series of summaries of interesting texts on computer vision. Here, we explore Wei Zhuo, Mathieu Salzmann, Xuming He and Miaomiao Liu’s article on indoor scene structure analysis, published in the Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition.

A single two-dimensional image lacks depth information. This raises the question: can depth be estimated by analyzing the scene’s structure, the relationships between objects, and the lighting? Without any prior information, such an estimate is highly ambiguous. Nevertheless, Zhuo et al. from the Australian National University in Canberra, Australia tackle the problem head on, without incorporating additional scene knowledge.

Human analysis of scenes relies heavily on our daily experience of moving through spaces. We recognize familiar objects and judge their relative positions and depths in two-dimensional scenes empirically. To the authors, this suggested that learning from existing image-depth pairs could make it possible to build algorithms that estimate depth from a single image, much as humans do.

Although many algorithms exist for estimating scene depth, the authors point out that their major drawback is that they analyze each pixel locally and only afterwards incorporate higher-level information to link these pixels into a depth map. The authors consider this counter-intuitive, since the human visual system grasps higher-level structure before it undertakes fine-grained local analysis.

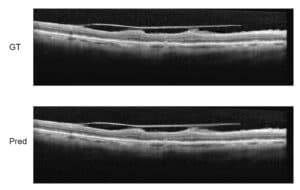

The authors therefore propose to exploit high-level structure for single image depth estimation. By building a hierarchical representation that spans local, mid-level and global information, the proposed algorithm produces absolute depth while preserving the global structure of the scene. Three layers are constructed: superpixels, regions, and layout. Depth estimation is modeled with a conditional Markov random field that encodes the relationships both within and between these layers. Local depth estimates are learned from training images drawn from datasets similar to the test images. Regional, or mid-level, information is obtained by segmenting the training images, and high-level information is obtained by modeling the scene as a 3D box (a room or similar space) and assigning each pixel a probability of belonging to each face of the box. The information from all layers is combined into a single energy function, whose minimization yields the depth map.
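To make the idea of minimizing a layered energy more concrete, here is a minimal Python sketch. It is not the authors' model: it assumes a toy Gaussian (purely quadratic) random field over superpixels with three hypothetical terms: a unary term pulling each superpixel toward a local depth prediction (`unary`), a layout term pulling it toward a depth implied by the room box (`layout`), and a pairwise smoothness term between adjacent superpixels (`edges`). The regional layer is left out for brevity, and the weights are arbitrary. Because every term is quadratic, the minimizer is simply the solution of a linear system.

```python
import numpy as np


def energy(d, unary, layout, edges, w_unary=1.0, w_layout=0.5, w_pair=1.0):
    """Toy quadratic energy (hypothetical, not the paper's potentials)."""
    e = w_unary * np.sum((d - unary) ** 2)  # fit to local predictions
    e += w_layout * np.sum((d - layout) ** 2)  # fit to layout-implied depth
    e += w_pair * sum((d[i] - d[j]) ** 2 for i, j in edges)  # smoothness
    return float(e)


def minimize_energy(unary, layout, edges, w_unary=1.0, w_layout=0.5, w_pair=1.0):
    """Closed-form minimizer of `energy` via its normal equations."""
    unary = np.asarray(unary, dtype=float)
    layout = np.asarray(layout, dtype=float)
    n = len(unary)
    # Graph Laplacian of the superpixel adjacency graph: sum over edges of
    # (d_i - d_j)^2 equals d^T L d.
    lap = np.zeros((n, n))
    for i, j in edges:
        lap[i, i] += 1
        lap[j, j] += 1
        lap[i, j] -= 1
        lap[j, i] -= 1
    # Setting the gradient to zero gives A d = b.
    a = (w_unary + w_layout) * np.eye(n) + w_pair * lap
    b = w_unary * unary + w_layout * layout
    return np.linalg.solve(a, b)


if __name__ == "__main__":
    unary = np.array([2.0, 2.4, 5.0, 5.3])  # hypothetical local depths (m)
    layout = np.array([2.2, 2.2, 5.0, 5.0])  # hypothetical box-implied depths
    edges = [(0, 1), (1, 2), (2, 3)]  # adjacency between superpixels
    print(minimize_energy(unary, layout, edges))
```

Raising `w_pair` pulls neighboring superpixels toward a common depth, while raising `w_layout` pulls them toward the box model. A model with non-quadratic potentials would generally need an iterative solver rather than a single linear solve.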

The method was evaluated on two publicly available depth datasets and compared with three state-of-the-art depth estimation algorithms. In terms of depth accuracy, the proposed algorithm outperformed two of the three.
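The summary does not say which accuracy measures were used. Purely as an illustration, the sketch below computes error measures that are common in the single-image depth literature: mean absolute relative error, root-mean-square error, mean log10 error, and threshold accuracy (the fraction of pixels whose ratio max(pred/gt, gt/pred) falls below 1.25, 1.25² and 1.25³). It assumes both inputs are positive depths in meters with invalid pixels already removed; the function name `depth_metrics` is hypothetical.

```python
import numpy as np


def depth_metrics(pred, gt):
    """Common single-image depth measures (illustrative, assumed inputs > 0)."""
    pred = np.asarray(pred, dtype=float)
    gt = np.asarray(gt, dtype=float)
    # Error measures: lower is better.
    rel = np.mean(np.abs(pred - gt) / gt)
    rms = np.sqrt(np.mean((pred - gt) ** 2))
    log10 = np.mean(np.abs(np.log10(pred) - np.log10(gt)))
    metrics = {"rel": float(rel), "rms": float(rms), "log10": float(log10)}
    # Threshold accuracy: higher is better.
    ratio = np.maximum(pred / gt, gt / pred)
    for k in (1, 2, 3):
        metrics[f"delta<1.25^{k}"] = float(np.mean(ratio < 1.25 ** k))
    return metrics


if __name__ == "__main__":
    print(depth_metrics([1.0, 2.0], [1.0, 4.0]))
```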

Reference:

Zhuo et al. “Indoor Scene Structure Analysis for Single Image Depth Estimation.” Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2015.

Image: "Städtische Bücherei Radstadt - book tower detail" by Herzi Pinki - Own work. Licensed under CC BY-SA 4.0 via Wikimedia Commons.