This article was first published in the October 2021 issue of Computer Vision News.

Étienne Léger recently completed his PhD at Concordia University under the supervision of Marta Kersten-Oertel. His research interest lies in Human-Computer Interaction, in particular evaluating how new methods can improve neurosurgical workflows.

His research focused on developing and assessing neurosurgical guidance tools that use novel paradigms, methods and hardware to make guidance more intuitive and interactive. He believes that through new hardware integration, neurosurgical guidance can be made more accessible, which can lead to improved patient outcomes. Congrats, Doctor Étienne!

It is estimated that 13.8 million patients per year require neurosurgical interventions worldwide, be it for a cerebrovascular disease, stroke, tumour resection, or epilepsy treatment, among others. These procedures involve navigating through and around complex anatomy in an organ where damage to eloquent healthy tissue must be minimized. Neurosurgery thus has very specific constraints compared to most other domains of surgical care.

These constraints have made neurosurgery particularly suitable for integrating new technologies. Any new method that could improve surgical outcomes is worth pursuing, as it may not only save and prolong patients' lives, but also increase their quality of life post-treatment.

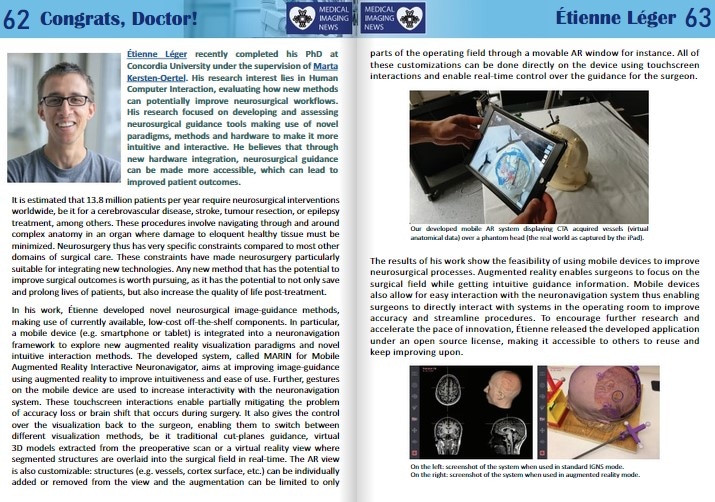

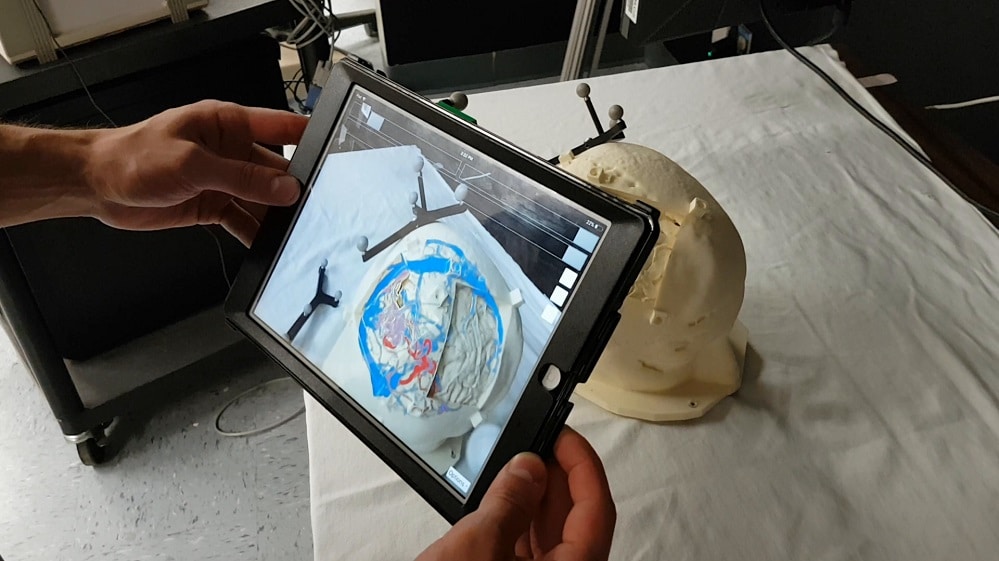

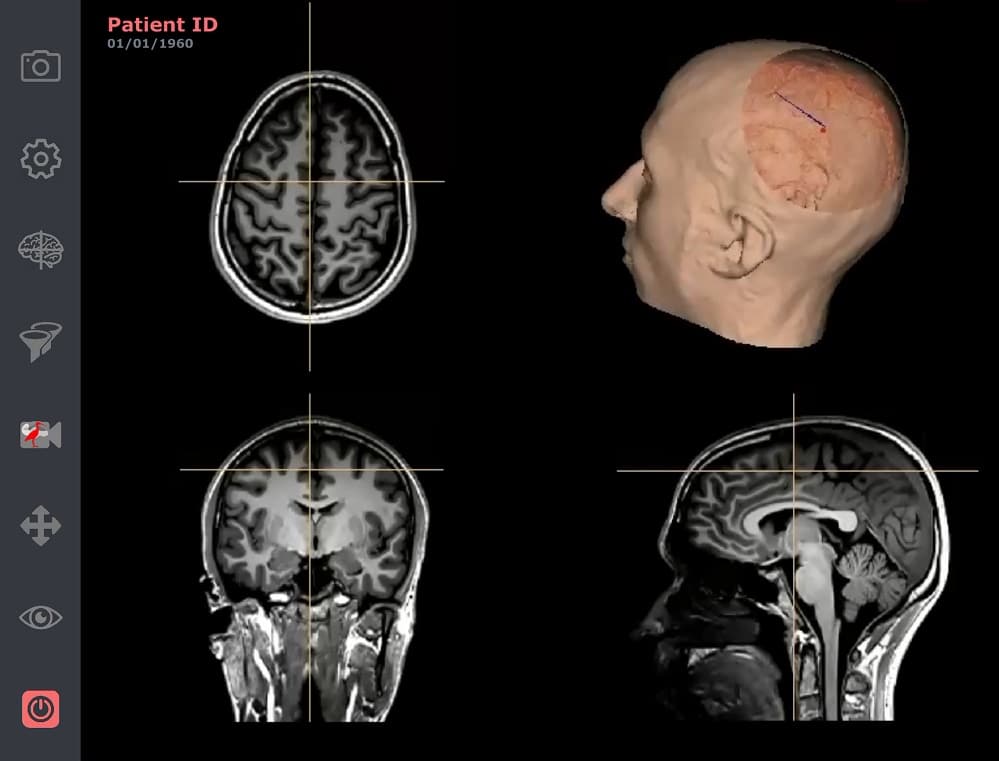

In his work, Étienne developed novel neurosurgical image-guidance methods using currently available, low-cost, off-the-shelf components. In particular, a mobile device (e.g. a smartphone or tablet) is integrated into a neuronavigation framework to explore new augmented reality (AR) visualization paradigms and novel intuitive interaction methods. The developed system, called MARIN for Mobile Augmented Reality Interactive Neuronavigator, uses augmented reality to make image guidance more intuitive and easier to use. Further, gestures on the mobile device are used to increase interactivity with the neuronavigation system. These touchscreen interactions help partially mitigate the accuracy loss or brain shift that occurs during surgery.

They also give control over the visualization back to the surgeon, who can switch between different visualization methods, be it traditional cut-plane guidance, virtual 3D models extracted from the preoperative scan, or an augmented reality view where segmented structures are overlaid onto the surgical field in real time. The AR view is also customizable: structures (e.g. vessels, cortex surface, etc.) can be individually added to or removed from the view, and the augmentation can be limited to only part of the operating field, for instance through a movable AR window. All of these customizations can be done directly on the device using touchscreen interactions, giving the surgeon real-time control over the guidance.
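To make the customizable AR view more concrete, here is a minimal sketch of how per-structure toggles and a movable AR window could be combined when compositing an overlay onto a camera frame. It is an illustration under stated assumptions, not MARIN's actual implementation: the names `Layer`, `Window`, `blend` and `composite` are hypothetical, frames are tiny nested lists of RGB tuples, and each structure is assumed to be already projected into the camera image as a boolean mask.

```python
from dataclasses import dataclass

Pixel = tuple[int, int, int]  # RGB, 0-255 per channel


@dataclass
class Layer:
    """One segmented structure, already projected into the camera image (hypothetical type)."""
    name: str
    color: Pixel
    mask: list[list[bool]]  # True where the structure covers a pixel
    visible: bool = True    # toggled by the user, e.g. via a touchscreen control


@dataclass
class Window:
    """Axis-aligned AR window in pixel coordinates: x in [x0, x1), y in [y0, y1)."""
    x0: int
    y0: int
    x1: int
    y1: int

    def contains(self, x: int, y: int) -> bool:
        return self.x0 <= x < self.x1 and self.y0 <= y < self.y1


def blend(base: Pixel, over: Pixel, alpha: float) -> Pixel:
    """Alpha-blend `over` on top of `base`, channel by channel."""
    return tuple(round((1 - alpha) * b + alpha * o) for b, o in zip(base, over))


def composite(frame, layers, window, alpha=0.5):
    """Return a copy of `frame` with visible layers blended in, inside `window` only."""
    out = [row[:] for row in frame]  # copy rows so the camera frame is left untouched
    for y, row in enumerate(frame):
        for x in range(len(row)):
            if not window.contains(x, y):
                continue  # outside the AR window: keep the raw camera pixel
            for layer in layers:
                if layer.visible and layer.mask[y][x]:
                    out[y][x] = blend(out[y][x], layer.color, alpha)
    return out


# Example: a 2x4 grey frame, one "vessels" layer, AR window over the left half.
frame = [[(100, 100, 100)] * 4 for _ in range(2)]
vessels = Layer("vessels", (200, 0, 0), [[True] * 4 for _ in range(2)])
result = composite(frame, [vessels], Window(0, 0, 2, 2))
```

In this sketch, dragging the AR window would amount to changing the `Window` coordinates, and hiding a structure to setting `visible = False` on its layer, before compositing the next frame.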

anatomical data) over a phantom head (the real world as captured by the iPad).

The results of his work show the feasibility of using mobile devices to improve neurosurgical processes. Augmented reality enables surgeons to focus on the surgical field while getting intuitive guidance information. Mobile devices also allow for easy interaction with the neuronavigation system, enabling surgeons to interact directly with systems in the operating room to improve accuracy and streamline procedures. To encourage further research and accelerate the pace of innovation, Étienne released the developed application under an open-source license, making it available for others to reuse and improve upon.
