Automated defect detection works by comparing each product in the manufacturing process against a gold-standard template and detecting unacceptable deviations from it.
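
As a minimal illustration of the template comparison, the sketch below subtracts an inspected image from a gold-standard template and flags pixels that deviate by more than a tolerance. It assumes the two grayscale images are already aligned (registration is a separate step not shown), and the `tolerance` and `min_pixels` values are hypothetical, chosen only for the example.

```python
import numpy as np

def golden_template_defects(inspected, template, tolerance=30, min_pixels=5):
    """Compare an inspected grayscale image against a gold-standard template.

    Assumes both images are already aligned and the same size. `tolerance`
    (grey levels) and `min_pixels` are hypothetical illustration values.
    Returns a boolean defect mask and a pass/fail flag.
    """
    # Cast to a signed type so the subtraction cannot wrap around for uint8 input.
    inspected = np.asarray(inspected, dtype=np.int32)
    template = np.asarray(template, dtype=np.int32)
    if inspected.shape != template.shape:
        raise ValueError("images must be aligned and the same size")
    deviation = np.abs(inspected - template)      # per-pixel absolute difference
    defect_mask = deviation > tolerance           # deviations beyond the tolerance
    is_defective = int(defect_mask.sum()) >= min_pixels
    return defect_mask, is_defective

# Usage: a flat template, a perfect copy, and a copy with a small bright blemish.
template = np.full((20, 20), 100, dtype=np.uint8)
good = template.copy()
bad = template.copy()
bad[5:8, 5:8] = 200                               # 9-pixel blemish

_, good_flag = golden_template_defects(good, template)
mask, bad_flag = golden_template_defects(bad, template)
print(good_flag, bad_flag, int(mask.sum()))       # False True 9
```

In practice the tolerance would be set per product, and lighting or alignment noise usually calls for a smoothing or morphological cleanup step before counting defect pixels.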

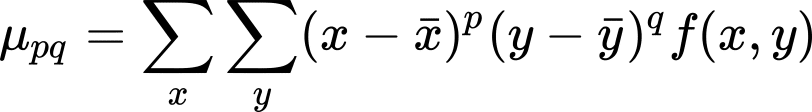

For well-defined patterned products such as PCBs, pattern matching algorithms can be used to estimate deviations from the template. Defects in other products, such as fruits and flowers, can be harder both to define and to detect. When both the defects and the product shapes vary widely, statistical methods, such as those offered by deep learning algorithms, are better suited to the job.
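
One common pattern matching score is zero-mean normalized cross-correlation (NCC); the text does not say which matching algorithm is used, so NCC here is an assumed, illustrative choice. The sketch slides a reference pattern over an image, reports the best-matching position, and shows that a damaged region scores lower than a perfect match.

```python
import numpy as np

def ncc(patch, reference):
    """Zero-mean normalized cross-correlation of two equal-size patches.

    Returns a score in [-1, 1]; 1 means a perfect match up to brightness and contrast.
    """
    a = np.asarray(patch, dtype=np.float64)
    b = np.asarray(reference, dtype=np.float64)
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    if denom == 0:
        return 0.0                                # flat patch: treat as no match
    return float((a * b).sum() / denom)

def best_match(image, reference):
    """Brute-force search; returns (score, (row, col)) of the best-matching window."""
    image = np.asarray(image, dtype=np.float64)
    h, w = reference.shape
    best = (-2.0, (0, 0))
    for r in range(image.shape[0] - h + 1):
        for c in range(image.shape[1] - w + 1):
            s = ncc(image[r:r + h, c:c + w], reference)
            if s > best[0]:
                best = (s, (r, c))
    return best

# Usage: a cross-shaped reference pattern embedded in a larger image.
ref = np.zeros((4, 4))
ref[1:3, :] = 1.0
ref[:, 1:3] = 1.0
image = np.zeros((12, 12))
image[3:7, 5:9] = ref

score, pos = best_match(image, ref)
damaged = image.copy()
damaged[4, 6] = 0.0                               # remove part of the pattern
print(pos, round(score, 6), ncc(damaged[3:7, 5:9], ref) < 0.99)  # (3, 5) 1.0 True
```

The nested loops keep the logic visible; for real images, OpenCV's `cv2.matchTemplate` with `cv2.TM_CCOEFF_NORMED` computes the same kind of score far more efficiently.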

Much like a human inspector, who judges each product individually, machine learning algorithms are trained to distinguish defects from variation within an acceptable range, based on the features and descriptors extracted from the product under inspection.
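
To make the idea of an "acceptable range" concrete, the sketch below learns per-feature bounds from descriptors of known-good products and rejects any product whose descriptors fall outside them. This is a deliberately simple statistical stand-in, not a deep learning classifier; the class name `AcceptanceRange`, the `k` parameter and the synthetic descriptors are all hypothetical.

```python
import numpy as np

class AcceptanceRange:
    """Hypothetical stand-in for a trained classifier.

    A product is accepted when every descriptor lies within `k` standard
    deviations of the mean observed on known-good samples.
    """

    def __init__(self, k=3.0):
        self.k = k

    def fit(self, good_descriptors):
        X = np.asarray(good_descriptors, dtype=np.float64)   # (n_samples, n_features)
        self.mean_ = X.mean(axis=0)
        self.std_ = X.std(axis=0) + 1e-12                    # avoid division by zero
        return self

    def is_acceptable(self, descriptor):
        d = np.asarray(descriptor, dtype=np.float64)
        z = np.abs((d - self.mean_) / self.std_)             # per-feature z-scores
        return bool(np.all(z <= self.k))

# Usage with synthetic descriptors of 500 good products (two features each).
rng = np.random.default_rng(0)
good = rng.normal(loc=[10.0, 0.5], scale=[1.0, 0.05], size=(500, 2))
model = AcceptanceRange(k=3.0).fit(good)
print(model.is_acceptable([10.2, 0.52]), model.is_acceptable([10.0, 0.9]))  # True False
```

A learned classifier replaces these axis-aligned bounds with a decision boundary fitted to labeled examples of both good and defective products, which is what makes it useful when defects are varied.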

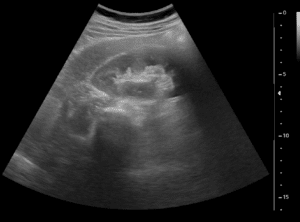

The actual deep learning architecture (number of layers and node connectivity) may differ according to the complexity of the problem. However, the U-Net architecture is a plausible and promising choice. U-Nets are fully convolutional neural networks (CNNs): the image passes through a sequence of downsampling steps, with features computed at each scale, followed by a sequence of upsampling steps in which skip connections reinject the features from the matching scale, producing the final classified (segmented or annotated) output image.
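
The data flow of a U-Net can be sketched in plain NumPy. The example below is a two-level forward pass with random, untrained weights, so its output labels are meaningless; it only shows how resolution halves on the way down, doubles on the way up, and how skip connections concatenate encoder features with the upsampled decoder features. The channel counts and function names are hypothetical, and "same" padding plus nearest-neighbour upsampling are simplifications of the original design, which used unpadded convolutions with cropping and learned up-convolutions.

```python
import numpy as np

def conv3x3(x, out_ch, rng, relu=True):
    """'Same'-padded 3x3 convolution over a (C, H, W) array.

    Weights are random placeholders drawn from `rng`; nothing here is trained.
    """
    c, h, w = x.shape
    weights = rng.standard_normal((out_ch, c, 3, 3)) * 0.1
    padded = np.pad(x, ((0, 0), (1, 1), (1, 1)))           # zero padding keeps H, W
    out = np.zeros((out_ch, h, w))
    for dy in range(3):
        for dx in range(3):
            window = padded[:, dy:dy + h, dx:dx + w]        # shifted view, (C, H, W)
            out += np.einsum("oc,chw->ohw", weights[:, :, dy, dx], window)
    return np.maximum(out, 0.0) if relu else out

def max_pool2(x):
    """2x2 max pooling: halves the spatial resolution (downsampling)."""
    c, h, w = x.shape
    return x.reshape(c, h // 2, 2, w // 2, 2).max(axis=(2, 4))

def upsample2(x):
    """Nearest-neighbour upsampling: doubles the spatial resolution."""
    return x.repeat(2, axis=1).repeat(2, axis=2)

def tiny_unet(image, n_classes=2, seed=0):
    """Two-level U-Net forward pass returning a per-pixel label map (H, W)."""
    img = np.asarray(image, dtype=np.float64)
    if img.shape[0] % 4 or img.shape[1] % 4:
        raise ValueError("height and width must be divisible by 4")
    rng = np.random.default_rng(seed)
    x0 = img[None]                                          # (1, H, W)
    e1 = conv3x3(x0, 8, rng)                                # (8, H, W)
    e2 = conv3x3(max_pool2(e1), 16, rng)                    # (16, H/2, W/2)
    bottleneck = conv3x3(max_pool2(e2), 32, rng)            # (32, H/4, W/4)
    # Decoder: upsample, then concatenate same-scale encoder features (skip connection).
    d2 = conv3x3(np.concatenate([upsample2(bottleneck), e2]), 16, rng)  # (16, H/2, W/2)
    d1 = conv3x3(np.concatenate([upsample2(d2), e1]), 8, rng)           # (8, H, W)
    logits = conv3x3(d1, n_classes, rng, relu=False)        # (n_classes, H, W)
    return logits.argmax(axis=0)                            # per-pixel class index

labels = tiny_unet(np.random.default_rng(1).random((32, 32)))
print(labels.shape)                                         # (32, 32)
```

A working inspection model would be built and trained in a deep learning framework on annotated images of defective and defect-free products; the sketch is only meant to make the architecture's shape transformations tangible.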

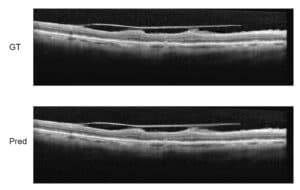

Because standard convolutional networks are not inherently invariant to rotation or scale, and because the orientation and geometry of products on the conveyor belt are often unpredictable, the features characterizing each inspected product should be scale- and rotation-invariant. Several image features exist for such cases, such as Harris corners (rotation-invariant) and SIFT (scale- and rotation-invariant), among others. In addition, features describing the texture of products are needed, as in fabric or ceramic defect inspection. For this purpose, central image moments, and the Hu moments derived from them, can be used. For an image f(x,y), the central moment of order p+q is defined as:
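
$$
\mu_{pq} = \sum_{x}\sum_{y} (x - \bar{x})^{p}\,(y - \bar{y})^{q}\,f(x,y), \qquad \bar{x} = \frac{m_{10}}{m_{00}}, \quad \bar{y} = \frac{m_{01}}{m_{00}}, \quad m_{pq} = \sum_{x}\sum_{y} x^{p}\,y^{q}\,f(x,y)
$$
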
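where p and q are non-negative integers. Central moments are invariant to translation and cheap to compute, and a small set of low-order moments gives a compact description of an image region. Normalizing them by the zeroth-order moment adds scale invariance, and the seven Hu moments, built from combinations of the normalized second- and third-order moments, are also invariant to rotation. Using Hu moments, additional features can be extracted from overlapping regions of the image at various scales and fed into the classifier or grading algorithm to improve the accuracy of RSIP Vision's automated defect inspection process.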
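
The moment definitions above translate directly into NumPy. The sketch below computes the central moments, normalizes them for scale, and combines them into the standard seven Hu invariants; it is an illustrative implementation, not RSIP Vision's code. The usage lines check numerically that translating or rotating a shape by 90 degrees leaves the invariants unchanged.

```python
import numpy as np

def hu_moments(image):
    """Seven Hu moment invariants of a grayscale image f(x, y)."""
    f = np.asarray(image, dtype=np.float64)
    y, x = np.mgrid[:f.shape[0], :f.shape[1]]      # y = row index, x = column index
    m00 = f.sum()                                  # zeroth-order moment
    xbar = (x * f).sum() / m00                     # centroid, m10 / m00
    ybar = (y * f).sum() / m00                     # centroid, m01 / m00

    def mu(p, q):                                  # central moment mu_pq
        return ((x - xbar) ** p * (y - ybar) ** q * f).sum()

    def eta(p, q):                                 # scale-normalized central moment
        return mu(p, q) / m00 ** (1 + (p + q) / 2)

    n20, n02, n11 = eta(2, 0), eta(0, 2), eta(1, 1)
    n30, n03, n21, n12 = eta(3, 0), eta(0, 3), eta(2, 1), eta(1, 2)

    h1 = n20 + n02
    h2 = (n20 - n02) ** 2 + 4 * n11 ** 2
    h3 = (n30 - 3 * n12) ** 2 + (3 * n21 - n03) ** 2
    h4 = (n30 + n12) ** 2 + (n21 + n03) ** 2
    h5 = ((n30 - 3 * n12) * (n30 + n12) * ((n30 + n12) ** 2 - 3 * (n21 + n03) ** 2)
          + (3 * n21 - n03) * (n21 + n03) * (3 * (n30 + n12) ** 2 - (n21 + n03) ** 2))
    h6 = ((n20 - n02) * ((n30 + n12) ** 2 - (n21 + n03) ** 2)
          + 4 * n11 * (n30 + n12) * (n21 + n03))
    h7 = ((3 * n21 - n03) * (n30 + n12) * ((n30 + n12) ** 2 - 3 * (n21 + n03) ** 2)
          - (n30 - 3 * n12) * (n21 + n03) * (3 * (n30 + n12) ** 2 - (n21 + n03) ** 2))
    return np.array([h1, h2, h3, h4, h5, h6, h7])

# Usage: an asymmetric L-like shape, a shifted copy, and a 90-degree rotation.
shape = np.zeros((40, 40))
shape[10:20, 12:16] = 1.0
shape[15:18, 16:25] = 1.0
moved = np.roll(shape, (5, 3), axis=(0, 1))
rotated = np.rot90(shape)
print(np.allclose(hu_moments(shape), hu_moments(moved)),
      np.allclose(hu_moments(shape), hu_moments(rotated)))  # True True
```

OpenCV offers the same computation through `cv2.moments` followed by `cv2.HuMoments`. Because the higher-order invariants span many orders of magnitude, they are often log-scaled before being used as classifier features.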

Deep learning architectures for automated inspection are expected to reach almost every domain of production. Soft classifiers, such as those offered by machine learning, are particularly suitable when the sensory information used for inspection, grading and sorting varies widely. However, the choice of features has a major impact on the success and accuracy of any classifier, and the features should be tailored to each product separately, according to its margin of acceptable defects.
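
A per-product margin of acceptable defects can be applied as a final grading step on top of a segmentation output. The sketch below assumes the classifier produces a boolean defect mask and a product mask, and compares the defective fraction of the product area against a per-product limit; the product types and tolerance values in `ACCEPTABLE_DEFECT_FRACTION` are hypothetical and would in practice come from each client's quality specification.

```python
import numpy as np

# Hypothetical per-product tolerances: the maximum fraction of the product area
# that may be labeled as defect before the item is rejected (illustrative only).
ACCEPTABLE_DEFECT_FRACTION = {
    "ceramic_tile": 0.001,
    "fabric_roll": 0.01,
    "apple": 0.05,
}

def grade(defect_mask, product_mask, product_type):
    """Grade one item from a segmentation output.

    defect_mask: boolean array, True where the classifier labeled a defect.
    product_mask: boolean array, True on pixels belonging to the product.
    Returns ("accept" or "reject", defect fraction).
    """
    defect_mask = np.asarray(defect_mask, dtype=bool)
    product_mask = np.asarray(product_mask, dtype=bool)
    area = product_mask.sum()
    if area == 0:
        raise ValueError("empty product mask")
    # Only count defect pixels that lie on the product itself.
    fraction = float((defect_mask & product_mask).sum() / area)
    limit = ACCEPTABLE_DEFECT_FRACTION[product_type]
    return ("accept" if fraction <= limit else "reject"), fraction

# Usage: the same 20-pixel defect on a 100x100 product, graded for two product types.
product = np.ones((100, 100), dtype=bool)
defects = np.zeros((100, 100), dtype=bool)
defects[0:4, 0:5] = True
print(grade(defects, product, "apple")[0], grade(defects, product, "ceramic_tile")[0])  # accept reject
```

The same defect can therefore pass for one product and fail for another, which is why the thresholds, like the features, are set per product.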

At RSIP Vision we have been developing cutting-edge image processing, computer vision and machine learning algorithms for several decades. We build tailor-made solutions for clients around the world, drawing on the expertise of our engineers, who are dedicated to delivering high standards of quality. To learn more about RSIP Vision's activity, please visit our latest projects page.