Artificial intelligence platforms using deep learning algorithms have made remarkable progress in general medical imaging, but their clinical use for upper gastrointestinal cancer has so far been limited.

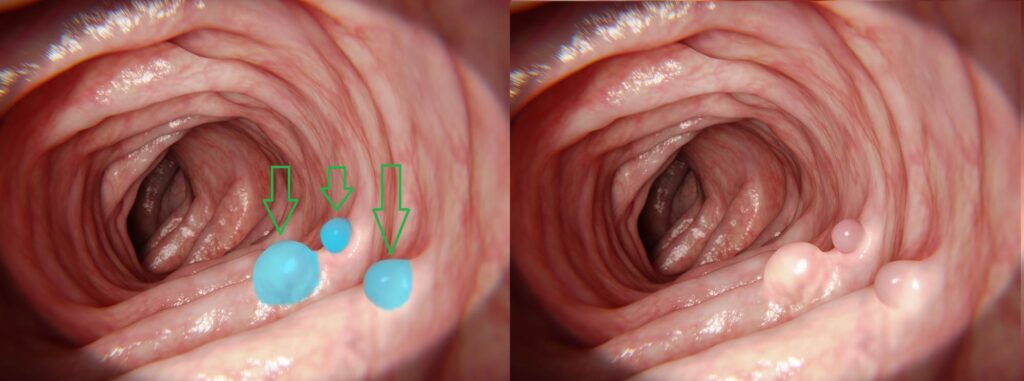

Screening for cancers of this type poses significant challenges. Images acquired by endoscopic cameras often suffer from poor and inconsistent quality. Difficult lighting conditions and low resolution make them noisy, so lesions and polyps are hard to see.

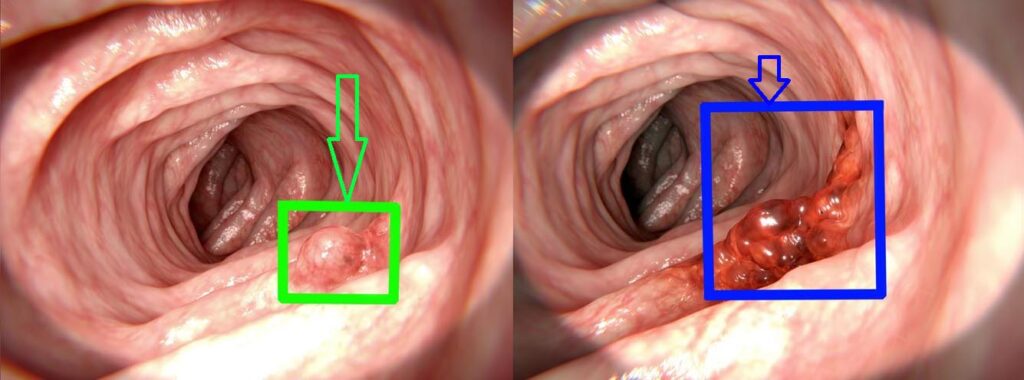

The wide variation in the appearance of suspected lesions and polyps, including their shape, color, texture, size, and irregularity, can also prove challenging.

The movement of the endoscope itself can interrupt the image flow and leave parts of the scan missing.

When performed manually, effective clinical interpretation of the scans to detect suspicious lesions and polyps is highly dependent on the reader’s skill and experience.

Training of AI for gastric cancer detection

To train a deep learning-based system, a large database of annotated endoscopic images is required, containing many examples of each type of cancerous finding. With enough such data, the system can approach the performance of an experienced endoscopist.

Several technological approaches can be used; the choice depends on factors such as run-time constraints, hardware limitations, and data availability.

A common CNN-based approach is the Single Shot MultiBox Detector (SSD). It is well suited to high-frame-rate applications because it performs detection in a single forward pass of the network over each frame, whereas two-stage detectors of the R-CNN family first propose candidate regions and then classify them. The resulting lower per-frame processing time makes SSD more suitable for endoscopic video.
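The link between per-frame processing time and suitability for video can be made concrete with a little arithmetic. The sketch below is only an illustration: the function names are hypothetical, and the latencies and frame rate in the usage note are made-up values, not measurements of any SSD or R-CNN model.

```python
def max_sustainable_fps(per_frame_latency_ms: float) -> float:
    """Highest frame rate a detector can sustain when frames are processed one at a time."""
    if per_frame_latency_ms <= 0:
        raise ValueError("latency must be positive")
    return 1000.0 / per_frame_latency_ms


def keeps_up(per_frame_latency_ms: float, video_fps: float) -> bool:
    """True if each frame can be processed before the next one arrives."""
    return max_sustainable_fps(per_frame_latency_ms) >= video_fps


if __name__ == "__main__":
    # Hypothetical numbers, chosen only to illustrate the calculation.
    video_fps = 25.0
    for name, latency_ms in [("single-stage detector", 20.0), ("two-stage detector", 100.0)]:
        print(name, max_sustainable_fps(latency_ms), keeps_up(latency_ms, video_fps))
```

With these assumed values, a detector that needs 20 ms per frame could follow a 25 fps stream, while one that needs 100 ms per frame would have to skip frames.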

Another method uses the VGG16 and VGG19 deep CNN architectures, which some models employ for feature extraction. VGG19, with its slightly deeper architecture, has been reported to give better results. Some experiments use narrow band images in addition to regular RGB images and report improved polyp detection, because specific color tones increase the polyp’s contrast relative to its background.

Using additional information, such as geometric properties, polyp context, temporal data, and environment information, can improve detection in situations where, for example, there is limited texture availability on the surface of the polyp.

The SSD approach was evaluated with three different feature extractors: ResNet50, VGG16, and InceptionV3. InceptionV3 obtained the most precise results, and the detector also ran faster than two-stage methods such as Faster R-CNN. The InceptionV3 structure applies convolutional kernels of different sizes in parallel, which lets the network extract features covering polyps of different sizes and shapes and so cope with the large variation in polyp appearance. The mean average precision (mAP) improved from 90% to 95%, including on small polyps, which are more challenging to detect.
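For readers unfamiliar with the metric, mAP is the mean, over object classes, of the average precision (AP) of ranked detections. The sketch below computes a non-interpolated AP for a single class. It is a simplified illustration under stated assumptions: all boxes are taken to belong to one image, a detection counts as correct when its intersection over union (IoU) with an unmatched ground-truth box is at least 0.5, and the function names are hypothetical. Published benchmarks use their own variants of this procedure, so it should not be read as the evaluation protocol behind the figures above.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(detections: List[Tuple[float, Box]],
                      ground_truth: List[Box],
                      iou_threshold: float = 0.5) -> float:
    """Non-interpolated AP: mean of the precision at each correctly detected box."""
    if not ground_truth:
        return 0.0
    matched = set()          # indices of ground-truth boxes already claimed
    true_positives = 0
    precision_sum = 0.0
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    for rank, (_score, box) in enumerate(ranked, start=1):
        best_iou, best_idx = 0.0, -1
        for idx, gt in enumerate(ground_truth):
            if idx in matched:
                continue
            overlap = iou(box, gt)
            if overlap > best_iou:
                best_iou, best_idx = overlap, idx
        if best_iou >= iou_threshold:
            matched.add(best_idx)
            true_positives += 1
            precision_sum += true_positives / rank
    # Missed ground-truth boxes contribute zero, which lowers the average.
    return precision_sum / len(ground_truth)
```

Averaging this value over all classes, or over several IoU thresholds in some benchmarks, gives the mAP.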

Reported results show accuracy in the 90–95% range or higher across various applications, with a sensitivity of 97% and specificity of 98%. Compared with manual review, sensitivity for screening indication is 99% (95% confidence interval: 95.3%–100%) and the area under the receiver operating characteristic (ROC) curve is 0.991. These results suggest that AI-driven applications can bring higher accuracy and consistency to the early detection of gastric cancer. Another benefit of these deep learning-based solutions is a low rate of false detections. Further fine-tuning and larger training sets should raise detection accuracy further, so that rare cases and pathologies can be handled safely.
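The metrics quoted here follow from simple counts of correct and incorrect decisions. The sketch below shows one way to compute sensitivity, specificity, accuracy, and the area under the ROC curve. The function names are hypothetical, and the counts and scores in the usage note are invented for illustration; they are not data from the cited studies.

```python
from typing import Dict, Sequence


def screening_metrics(tp: int, fp: int, tn: int, fn: int) -> Dict[str, float]:
    """Sensitivity, specificity and accuracy from confusion-matrix counts."""
    return {
        "sensitivity": tp / (tp + fn),   # share of true lesions that were flagged
        "specificity": tn / (tn + fp),   # share of normal cases correctly cleared
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
    }


def roc_auc(positive_scores: Sequence[float], negative_scores: Sequence[float]) -> float:
    """AUC as the probability that a random positive outscores a random negative.

    Ties count as half a win. This pairwise form is quadratic in the number
    of samples, which is fine for a small illustration.
    """
    wins = 0.0
    for p in positive_scores:
        for n in negative_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positive_scores) * len(negative_scores))


if __name__ == "__main__":
    # Invented counts: 100 lesion cases and 100 normal cases.
    print(screening_metrics(tp=97, fp=2, tn=98, fn=3))
    # Invented model scores for three lesion and three normal frames.
    print(roc_auc([0.9, 0.8, 0.4], [0.5, 0.3, 0.2]))
```

Sensitivity and specificity depend on the decision threshold applied to the model's score, whereas the ROC AUC summarizes performance across all thresholds, which is why the two kinds of figure are usually reported together.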

References

[1] Gregor Urban, Priyam Tripathi, Talal Alkayali, Mohit Mittal, Farid Jalali, William Karnes, Pierre Baldi. Deep Learning Localizes and Identifies Polyps in Real Time With 96% Accuracy in Screening Colonoscopy. Gastroenterology, Volume 155, Issue 4, Pages 1069–1078, October 2018.

[2] Xu Zhang, Fei Chen, Tao Yu, Jiye An, Zhengxing Huang, Jiquan Liu, Weiling Hu, Liangjing Wang, Huilong Duan, Jianmin Si. Real-time gastric polyp detection using convolutional neural networks. PLOS ONE, March 25, 2019.

[3] Rafid Mostafiz, Mosaddik Hasan, Imran Hossain, Mohammad M. Rahman. An intelligent system for gastrointestinal polyp detection in endoscopic video using fusion of bidimensional empirical mode decomposition and convolutional neural network features. Wiley Online Library, June 6, 2019.
