Fringe pattern projection for 3D object reconstruction has been in use for three decades. The method is applied to the reconstruction of static and dynamic objects in diverse fields such as biomedicine, dentistry, circuit board inspection, virtual reality, vibration analysis, recreational 3D light spectacles and many others. Much of this development is owed to the growing sophistication of the models used to encode the light patterns and decode the captured output into 3D height maps of the scanned object’s surface.

A fringe projection system is composed of a camera and a projector, calibrated and placed apart, much like the two-camera arrangement of a stereo vision system. The projector projects a pattern of structured light (usually a periodic stripe pattern) onto the object, and the camera records how the projected pattern falls on the object. The result is a deformed fringe image, bent according to the three-dimensional surface of the object. These deformed stripes carry geometrical information about the height map of the object’s surface. Mathematical models that analyze the deformed stripes translate the reflected light into a 3D point cloud representation of the scanned object.

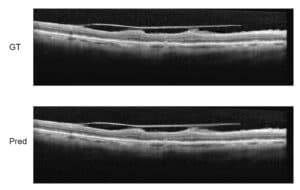

Fringe analysis techniques are used to interpret the phase distribution of the deformed fringe image. Ambiguities in the interpretation of the reflected light, caused by partial occlusion, can be resolved by projecting phase-shifted patterns, or by encoding the stripes by color (RGB), so that each stripe in the projected pattern can be uniquely identified. The stripe pattern can also be moved across the object during scanning. By modeling the reflected light profile as a Gaussian, sub-pixel resolution can be obtained by localizing the peak of the distribution from its analytical expression.
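The Gaussian peak localization mentioned above can be illustrated with a minimal sketch. It assumes a 1D intensity profile sampled across a single stripe, with strictly positive values, and uses the fact that the logarithm of a Gaussian is a parabola, so three samples around the brightest pixel are enough to locate the peak. The function name and the synthetic profile are hypothetical and chosen only for illustration.

```python
import math

def gaussian_subpixel_peak(profile):
    """Estimate the sub-pixel peak position of a 1D stripe intensity profile.

    Assumes the profile is approximately Gaussian near its maximum and that
    all samples are strictly positive (the logarithm is taken).
    """
    # Brightest interior sample, so that both neighbours exist.
    k = max(range(1, len(profile) - 1), key=lambda i: profile[i])
    left, centre, right = (math.log(profile[i]) for i in (k - 1, k, k + 1))
    # The log of a Gaussian is a parabola; find the vertex of the parabola
    # passing through the three log-intensity samples.
    curvature = left - 2.0 * centre + right
    if curvature == 0.0:
        return float(k)  # flat profile: fall back to the integer position
    return k + (left - right) / (2.0 * curvature)

# Synthetic stripe profile with a known peak between pixels 10 and 11.
true_peak = 10.3
sigma = 2.0
profile = [math.exp(-((x - true_peak) ** 2) / (2.0 * sigma ** 2)) for x in range(21)]
estimate = gaussian_subpixel_peak(profile)
```

On noise-free Gaussian data the three-point estimate recovers the peak exactly up to floating-point error. With real images, noise and saturation would limit the accuracy, and fitting over more samples is a common refinement.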

The phase recovered by fringe analysis is wrapped: it is known only modulo 2π, for example as a value in the range of 0 to 2π. The process of resolving the resulting 2π jumps between adjacent pixels, so that the phase becomes continuous across the image, is called phase unwrapping. Errors in phase estimation are caused by properties of the object and by lighting conditions such as reflections and shadows.
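As a rough one-dimensional illustration of phase unwrapping, the sketch below removes 2π jumps along a single row of pixels. It assumes the true phase changes by less than π between neighbouring pixels, which the reflections and shadows mentioned above can violate. The function names are hypothetical, and practical systems typically use more robust two-dimensional or temporal methods.

```python
import math

TWO_PI = 2.0 * math.pi

def wrap(phase):
    """Wrap a phase value into the range [0, 2*pi)."""
    return phase % TWO_PI

def unwrap_1d(wrapped):
    """Unwrap a 1D sequence of wrapped phases by removing 2*pi jumps.

    Assumes the true phase difference between neighbours is below pi.
    """
    unwrapped = [wrapped[0]]
    for prev, curr in zip(wrapped, wrapped[1:]):
        # Map the observed difference into [-pi, pi): the smallest phase
        # change that is consistent with the two wrapped samples.
        step = (curr - prev + math.pi) % TWO_PI - math.pi
        unwrapped.append(unwrapped[-1] + step)
    return unwrapped

# A steadily increasing phase ramp, wrapped and then recovered.
true_phase = [0.5 * i for i in range(40)]
wrapped = [wrap(p) for p in true_phase]
recovered = unwrap_1d(wrapped)
```

The recovered sequence is only defined up to a constant multiple of 2π. Here it matches the original ramp because the first sample is already inside the wrapped range.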

In the next stage, a correspondence between the 2D image pixels and 3D positions needs to be resolved. This is done by calibrating the system so that camera image coordinates map to real-world 3D coordinates representing the height of the object’s surface. Standard methods for triangulation, such as those based on epipolar geometry, can be used for the task.
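One common way to picture the triangulation step is as the intersection of a camera ray with the plane of light cast by a single projector stripe. The sketch below uses that ray-plane formulation rather than an explicit epipolar construction, and it is not necessarily how any particular system is implemented. It assumes an ideal pinhole camera at the origin with no lens distortion; the intrinsic values, the plane parameters and all function names are hypothetical.

```python
def pixel_to_ray(u, v, fx, fy, cx, cy):
    """Direction of the camera ray through pixel (u, v) for a pinhole camera."""
    return ((u - cx) / fx, (v - cy) / fy, 1.0)

def intersect_ray_plane(ray, normal, offset):
    """Intersect a ray from the camera origin with the plane normal . X = offset."""
    denom = sum(n * r for n, r in zip(normal, ray))
    if abs(denom) < 1e-12:
        raise ValueError("ray is parallel to the light plane")
    t = offset / denom
    return tuple(t * r for r in ray)

# Hypothetical intrinsics: focal lengths and principal point in pixels.
fx, fy, cx, cy = 800.0, 800.0, 320.0, 240.0

# A known 3D point in camera coordinates and the pixel it projects to.
known_point = (0.1, -0.05, 1.0)
u = fx * known_point[0] / known_point[2] + cx
v = fy * known_point[1] / known_point[2] + cy

# Hypothetical light plane of one stripe, chosen to pass through the point.
plane_normal = (1.0, 0.0, 0.5)
plane_offset = sum(n * p for n, p in zip(plane_normal, known_point))

point = intersect_ray_plane(pixel_to_ray(u, v, fx, fy, cx, cy), plane_normal, plane_offset)
```

In a real scanner the plane for each pixel would come from the decoded stripe index or unwrapped phase together with the projector calibration, rather than being constructed from a known point as in this check.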

3D object reconstruction by fringe projection is widely used in industry. However, many problems are yet to be completely solved and remain a bottleneck in the use of fringe projection. Examples of such problems include the reconstruction of shiny objects and of isolated objects. Some solutions have been proposed for these problems, but tailor-made solutions still need to be routinely employed.

The reconstruction pipeline demands careful monitoring by the algorithm development team. To meet the accuracy and speed demands of particular industrial applications, challenges in computer vision must be overcome. Fringe reconstruction requires expertise in choosing the right phase unwrapping algorithm, in system calibration, and in post-processing (such as texture mapping or constructing a multi-scanner system) to achieve optimal results in terms of both accuracy and speed. The expertise accumulated at RSIP Vision over the past three decades has generated cutting-edge operative algorithms for 3D object reconstruction in industry and research. Please consult our projects page to learn how to harness the knowledge of RSIP Vision for the success of your project. You can also read the online magazine of the algorithm community.
