AI for BPH

Benign prostatic hyperplasia (BPH) is a common condition in aging men and can lead to lower urinary tract symptoms (LUTS). Recent advances in artificial intelligence (AI) and deep learning (DL) can aid in BPH detection, classification and treatment.

Deep Learning and AI for BPH classification

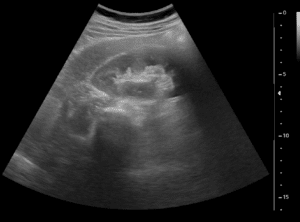

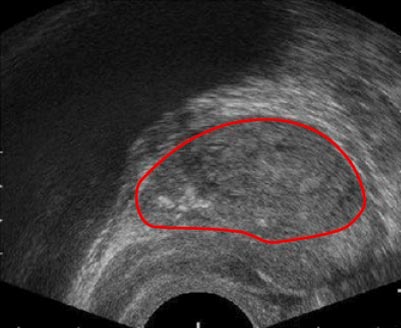

Accurate assessment of BPH severity is essential for selecting the right course of action: a biopsy to rule out cancer, or standard BPH treatment. Ultrasound (US) and MRI are the main imaging modalities used to detect BPH, and processing these images with deep-learning segmentation tools gives the physician a baseline for severity classification. Algorithms trained on ultrasound and MRI images of BPH can separate cases that require further diagnosis, acute treatment, or conservative measures, enabling state-of-the-art BPH classification.
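One way a segmentation becomes a number the physician can use is prostate volume. The sketch below assumes a binary 3D mask (here a synthetic voxelized ellipsoid standing in for a CNN output) and a hypothetical 0.5 mm voxel size. It computes the volume by voxel counting and compares it with the common ellipsoid approximation (length × width × height × π/6). The function names are illustrative, and the code does not implement any severity classifier.

```python
import numpy as np


def mask_volume_ml(mask: np.ndarray, spacing_mm: tuple) -> float:
    """Volume of a binary segmentation mask in millilitres (1 mL = 1000 mm^3)."""
    voxel_mm3 = float(np.prod(spacing_mm))
    return float(mask.astype(bool).sum()) * voxel_mm3 / 1000.0


def mask_extents_mm(mask: np.ndarray, spacing_mm: tuple) -> tuple:
    """Bounding-box extent of the mask along each axis, in mm."""
    idx = np.argwhere(mask)
    span = idx.max(axis=0) - idx.min(axis=0) + 1
    return tuple(float(s) * sp for s, sp in zip(span, spacing_mm))


def ellipsoid_volume_ml(length_mm: float, width_mm: float, height_mm: float) -> float:
    """Ellipsoid approximation L * W * H * pi / 6, converted to mL."""
    return length_mm * width_mm * height_mm * np.pi / 6.0 / 1000.0


# Synthetic stand-in for a CNN output: a voxelized ellipsoid on a 0.5 mm grid.
spacing = (0.5, 0.5, 0.5)                      # hypothetical voxel size (mm)
z, y, x = np.mgrid[-50:50, -50:50, -50:50]
mask = (z / 40) ** 2 + (y / 30) ** 2 + (x / 25) ** 2 <= 1.0  # semi-axes in voxels

vol = mask_volume_ml(mask, spacing)
est = ellipsoid_volume_ml(*mask_extents_mm(mask, spacing))
print(f"voxel-count volume: {vol:.1f} mL, ellipsoid estimate: {est:.1f} mL")
```

Voxel counting uses the full segmented shape. The ellipsoid formula needs only three diameters, which is why it is convenient when no segmentation is available.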

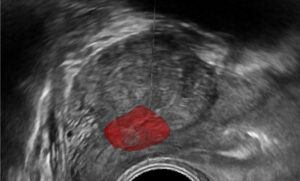

The ultrasound-guided biopsy itself can also benefit from this technology. Advanced AI algorithms make it feasible to reconstruct 2D ultrasound images into a 3D model of the prostate gland in real time, giving a spatial view of the gland and improving navigation. Furthermore, registration (or fusion) of the ultrasound image with a baseline MRI scan can provide the clinician with a “map” of the gland, onto which the real-time ultrasound images are accurately fused. This takes advantage of the superior resolution and image quality of MRI compared with ultrasound, making navigation easier, which can significantly shorten the procedure and reduce complications.
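Both 3D reconstruction and US-MRI fusion rest on chaining coordinate transforms. Each 2D pixel is placed in 3D using the tracked probe pose, then mapped into MRI space by the registration result. The sketch below shows only this geometric bookkeeping with 4×4 homogeneous matrices, not the AI components. Several things are assumptions: the pose values, the 0.5 mm pixel size, the names `rigid_z`, `frame_to_world` and `us_pixel_to_mri`, the reduction of probe calibration to a single scale factor, and the rigid MRI transform (prostate fusion may need deformable registration in practice).

```python
import numpy as np


def rigid_z(angle_deg: float, translation_mm) -> np.ndarray:
    """4x4 homogeneous transform: rotation about z, then translation (mm)."""
    a = np.deg2rad(angle_deg)
    T = np.eye(4)
    T[:3, :3] = [[np.cos(a), -np.sin(a), 0.0],
                 [np.sin(a), np.cos(a), 0.0],
                 [0.0, 0.0, 1.0]]
    T[:3, 3] = translation_mm
    return T


def frame_to_world(frame_shape, pixel_mm: float, probe_to_world: np.ndarray) -> np.ndarray:
    """3D world coordinates (N x 3, mm) of every pixel of one tracked 2D frame.

    Assumes the image plane is z = 0 in the probe frame and pixels are square.
    """
    rows, cols = frame_shape
    v, u = np.mgrid[0:rows, 0:cols]
    pts = np.stack([u.ravel() * pixel_mm, v.ravel() * pixel_mm,
                    np.zeros(u.size), np.ones(u.size)])
    return (probe_to_world @ pts)[:3].T


def us_pixel_to_mri(u, v, pixel_mm, probe_to_world, world_to_mri) -> np.ndarray:
    """Map one ultrasound pixel (column u, row v) into MRI coordinates (mm)."""
    p = np.array([u * pixel_mm, v * pixel_mm, 0.0, 1.0])
    return (world_to_mri @ probe_to_world @ p)[:3]


# Hypothetical poses: the tracker reports the probe rotated 90 degrees and
# shifted 10 mm in x; registration found a 5 mm offset in z to the MRI.
probe_to_world = rigid_z(90.0, [10.0, 0.0, 0.0])
world_to_mri = rigid_z(0.0, [0.0, 0.0, 5.0])

cloud = frame_to_world((4, 3), 0.5, probe_to_world)   # 12 points in 3D
print(us_pixel_to_mri(20, 0, 0.5, probe_to_world, world_to_mri))
```

Accumulating `frame_to_world` points over many tracked frames yields the 3D point set from which a volume can be built. Applying `world_to_mri` to that point set places it on the MRI “map”.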

Deep Learning and AI in BPH treatment

No treatment

Often, the clinician decides that the BPH is not severe and that intervention is unnecessary. Follow-up scans are commonly performed in the following months, and an accurate comparison with the baseline scans is essential for an optimal treatment decision. Registering the new scan to the baseline images and outlining the prostate edges highlight prostate growth and change over time. Deep learning algorithms and advanced AI allow quick and accurate registration and edge detection, which makes them well suited to this task. Such algorithms have proven effective on various medical datasets, and BPH is no exception. Convolutional neural networks (CNNs) outperform other methodologies and produce very accurate segmentations, from which numerical information can be extracted to assist the clinician’s decision-making. Deep learning handles the distinctive texture of prostate ultrasound images very well, while classical algorithms often struggle with the variation between images.
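Once baseline and follow-up segmentations exist, the comparison can be reduced to a few numbers. The sketch below assumes two binary masks (synthetic spheres standing in for CNN output) and uses the Dice overlap and relative volume change. It substitutes a crude centroid-based, translation-only alignment for the learned registration described above, so it shows the bookkeeping rather than the registration method itself. All function names are hypothetical.

```python
import numpy as np


def sphere(shape, center, radius):
    """Binary sphere, standing in for a CNN prostate segmentation."""
    grid = np.indices(shape)
    dist2 = sum((g - c) ** 2 for g, c in zip(grid, center))
    return dist2 <= radius ** 2


def dice(a, b) -> float:
    """Dice overlap 2|A and B| / (|A| + |B|) between two binary masks."""
    a, b = a.astype(bool), b.astype(bool)
    denom = a.sum() + b.sum()
    return 1.0 if denom == 0 else float(2.0 * np.logical_and(a, b).sum() / denom)


def align_by_centroid(moving, fixed):
    """Translation-only alignment: shift `moving` (whole voxels) so its centroid matches `fixed`.

    A crude stand-in for learned registration; np.roll wraps at the edges,
    so the object must lie well inside the volume.
    """
    shift = np.round(np.argwhere(fixed).mean(axis=0) - np.argwhere(moving).mean(axis=0))
    return np.roll(moving, tuple(int(s) for s in shift), axis=tuple(range(moving.ndim)))


def volume_change_percent(baseline, followup) -> float:
    """Relative change in segmented volume between two scans."""
    b, f = int(baseline.sum()), int(followup.sum())
    return 100.0 * (f - b) / b


baseline = sphere((80, 80, 80), (40, 40, 40), 15)
followup = sphere((80, 80, 80), (44, 38, 40), 17)   # grown and shifted
aligned = align_by_centroid(followup, baseline)

print(f"Dice before: {dice(baseline, followup):.3f}, after: {dice(baseline, aligned):.3f}")
print(f"volume change: {volume_change_percent(baseline, followup):+.1f}%")
```

After alignment, the remaining Dice deficit reflects actual growth rather than a change in patient position. This separation is what registration contributes to follow-up comparison.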

Symptom relief

Minimally invasive procedures, such as stent placement or urinary tract dilation, are used to relieve BPH symptoms. Accurate placement of the dilation devices is critical for optimal relief. Real-time tracking, 3D image reconstruction, and fusion can all provide better guidance during these procedures. Rather than navigating with the narrow 2D field of view of the laparoscopic camera or with blurry ultrasound images, the clinician can take advantage of the better-quality MRI images and the depth dimension of a 3D model. Extracting information from 3D data is a suitable task for advanced deep learning algorithms. Ultrasound-MRI fusion is performed by detecting corresponding keypoints in both the ultrasound and the MRI images and then computing a transform from them. Because it is often difficult to define rules that distinguish useful features, classical feature detectors may fail in certain cases. Instead, fully convolutional architectures are used to produce a heat map of candidate features.
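The paragraph above describes two steps: a network produces heat maps whose peaks are candidate keypoints, and matched keypoints then define the US-to-MRI transform. The sketch below covers only the processing around the network. It applies 3×3 non-maximum suppression to a 2D heat map, then computes a least-squares rigid fit of matched 3D keypoints using the Kabsch (orthogonal Procrustes) method. Several things are assumptions: the network itself is not shown, the heat map is synthetic, keypoint matching is taken as already done, the 0.5 threshold and function names are hypothetical, and a rigid model is used even though deformable models may be needed in practice.

```python
import numpy as np


def heatmap_peaks(heatmap: np.ndarray, threshold: float = 0.5) -> np.ndarray:
    """(row, col) of 3x3 local maxima above `threshold` in a 2D keypoint heat map."""
    h = np.pad(heatmap.astype(float), 1, mode="constant", constant_values=-np.inf)
    rows, cols = heatmap.shape
    center = h[1:-1, 1:-1]
    is_max = center > threshold
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            if dr or dc:  # compare against each of the 8 neighbours
                is_max &= center >= h[1 + dr:1 + dr + rows, 1 + dc:1 + dc + cols]
    return np.argwhere(is_max)


def rigid_from_keypoints(src: np.ndarray, dst: np.ndarray):
    """Least-squares rotation R and translation t with dst ~ src @ R.T + t (Kabsch)."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)          # cross-covariance of centred points
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))       # guard against a reflection
    D = np.diag([1.0] * (src.shape[1] - 1) + [d])
    R = Vt.T @ D @ U.T
    t = dst_c - R @ src_c
    return R, t


# Two synthetic blobs in a heat map, e.g. one output channel of a network.
rr, cc = np.mgrid[0:40, 0:40]
hm = (np.exp(-((rr - 10) ** 2 + (cc - 12) ** 2) / 8)
      + np.exp(-((rr - 30) ** 2 + (cc - 5) ** 2) / 8))
print(heatmap_peaks(hm))

# Matched 3D keypoints: "MRI" points are rotated and shifted "ultrasound" points.
rng = np.random.default_rng(0)
us_pts = rng.uniform(-20, 20, size=(6, 3))
a = np.deg2rad(15)
R_true = np.array([[np.cos(a), -np.sin(a), 0], [np.sin(a), np.cos(a), 0], [0, 0, 1]])
t_true = np.array([3.0, -2.0, 7.5])
mri_pts = us_pts @ R_true.T + t_true
R, t = rigid_from_keypoints(us_pts, mri_pts)
```

With noise-free correspondences the fit recovers the transform exactly. With real detections, a robust wrapper such as RANSAC around `rigid_from_keypoints` is a common way to reject mismatched pairs.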

Prostatectomy

Removal of the prostate gland is necessary in some cases. There are many ways to do this in a minimally invasive manner: laser, waterjet, steam, or standard cutting. What all these methods have in common is the need to remain within the prostate bounds and avoid injuring the surrounding tissues and organs. Accurate tracking of the ablation device, together with fusion of the guiding imaging modality with pre-operative scans, can show the operator a visible border for the procedure and keep the procedure within it at all times. This is achieved by analyzing the live video feed, registering images in real time, and providing accurate positioning information, thereby reducing the rate of adverse events.
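Suppose the tracked tip position has already been registered into the voxel grid of a segmented pre-operative scan. The “stay within bounds” check then reduces to two questions: is the tip inside the gland mask, and how far is it from the mask boundary? The function names and the 2 mm margin below are hypothetical, and the tip is assumed to lie within the image volume. The brute-force nearest-boundary search is for clarity. A precomputed distance map (for example `scipy.ndimage.distance_transform_edt` with its `sampling` argument) would be the usual faster choice.

```python
import numpy as np


def boundary_voxels(mask: np.ndarray) -> np.ndarray:
    """Indices of mask voxels with at least one 6-connected neighbour outside the mask."""
    m = np.pad(mask.astype(bool), 1, constant_values=False)
    inner = m[1:-1, 1:-1, 1:-1]
    interior = inner.copy()
    for axis in range(3):
        for step in (-1, 1):
            interior &= np.roll(m, step, axis=axis)[1:-1, 1:-1, 1:-1]
    return np.argwhere(inner & ~interior)


def check_tool_tip(tip_vox, mask, spacing_mm, margin_mm=2.0):
    """Safety check for a tracked tool tip given in the pre-op scan's voxel grid.

    Returns (inside, distance to nearest boundary voxel in mm, warn).
    """
    tip = np.asarray(tip_vox, dtype=float)
    inside = bool(mask[tuple(np.round(tip).astype(int))])
    offsets_mm = (boundary_voxels(mask) - tip) * np.asarray(spacing_mm, dtype=float)
    dist_mm = float(np.linalg.norm(offsets_mm, axis=1).min())
    warn = (not inside) or dist_mm < margin_mm
    return inside, dist_mm, warn


# Synthetic gland: sphere of radius 15 voxels on a 0.5 mm grid (7.5 mm radius).
zz, yy, xx = np.indices((80, 80, 80))
gland = (zz - 40) ** 2 + (yy - 40) ** 2 + (xx - 40) ** 2 <= 15 ** 2
spacing = (0.5, 0.5, 0.5)

for tip in [(40, 40, 40), (54, 40, 40), (60, 40, 40)]:
    print(tip, check_tool_tip(tip, gland, spacing))
```

A warning is raised both when the tip leaves the gland and when it comes within the margin of the boundary while still inside. The margin is what gives the operator time to react.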

RSIP Vision’s role in AI for BPH

BPH treatment needs to be minimally invasive and precise while relying on accurate measurements. Multimodal imaging techniques, and the ability to process them with deep learning and AI, can optimize BPH healthcare. RSIP Vision’s experience in medical image segmentation and registration, as well as in real-time tool tracking, can be used to implement these algorithms specifically for the detection, classification and treatment of BPH, providing the basis for a new set of advanced tools and capabilities.

Urology
